"It's not a perfect system" - my thoughts on changes to Reuters' commenting policy

I've previously made some of my views on newspaper website comments pretty clear - I miss them when they aren't there, and I don't think forcing people to use their real names is a way to improve their value to a news organisation. The Reuters decision to change their commenting policy has been seen as another step towards news sites "civilising" the debates that appear under stories. A few things about it stood out for me.

An end to blanket pre-moderation

Whilst a lot of attention was focused on the idea of encouraging people to be more open with their identity, one of the key shifts for Reuters is a move away from blanket pre-moderation.

"Until recently, our moderation process involved editors going through a basket of all incoming comments, publishing the ones that met our standards and blocking the others."

Pre-moderating every single comment by every single user on every single thread is intimidatingly expensive. Reactive moderation, where appropriate, is always a much more cost-effective way of moderating a large news community, even if it does carry more risk.

Getting the game mechanics right

Introducing new features to an existing community online can be disruptive and have unintended consequences. Richard Baum at Reuters states that:

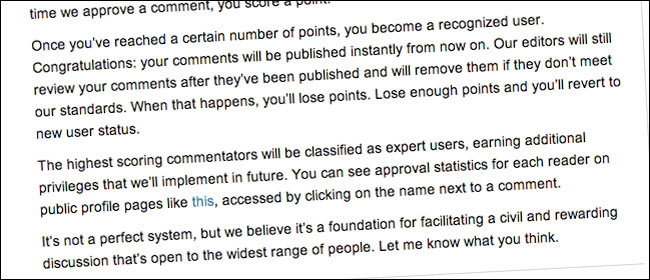

"Your comments go through the same moderation process as before, but every time we approve a comment, you score a point. Once you've reached a certain number of points, you become a recognized user.

...

The highest scoring commentators will be classified as expert users, earning additional privileges that we'll implement in future"

The risk here is that if you simply reward prolific commenting, you encourage an army of people posting "Me too", "Great post" and "I agree" just to bolster their comment count. When thinking about how users earn extra features or privileges, you also need to concentrate on metrics that reward quality, not quantity.

Exposing moderation records

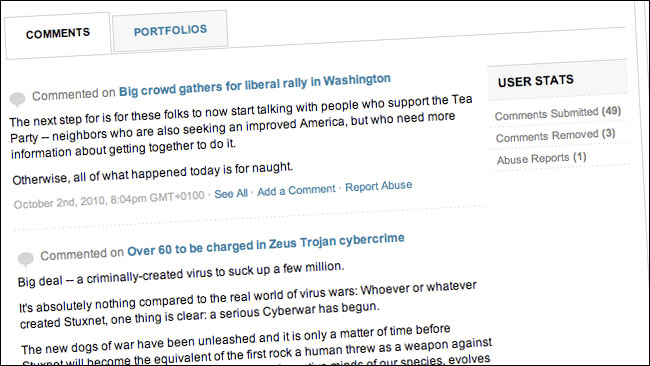

Reuters have taken the step of exposing users' moderation histories. A user's profile will now tell you how many comments they have submitted and how many have been rejected.

I presume this has been implemented to encourage users to think twice about submitting trashy comments, in the knowledge that it will appear on their public record.

I do worry, though, that they've rather given a hostage to fortune with this feature. It surely won't be long until we get the first blog posts saying 'I looked at all the user profiles of the people commenting on this thread about global warming, and I can PROVE that Reuters rejects 12% of comments by users who do not support the myth of man-made climate change, but only rejects 4% of postings by ecofascists. Therefore it is PROVEN that Reuters is run by the lizard people of the Illuminati'.

Who the hell is Rudy Haugeneder anyway?

One odd decision made in the announcement was to link to a real user's profile.

I've no idea who Rudy Haugeneder is.

Actually, that isn't true.

It took me about two minutes to discover that he appears to be someone who regularly comments on a variety of news sites, including the New York Times and the BBC, with a fairly consistent online identity. He seems to come from Victoria, Canada, and it looks like he used to write occasionally for a newspaper there.

What I'm not clear about is why Reuters decided to throw a spotlight on him personally.

Sure, his profile is public on the site, but I always think that if you are showing off new community features, it is best to either use mock-up images, or use a member of staff's profile as the example you show. It seems a bit weird to pick one member out of a large community site and say 'look at this guy whilst you check out our new features'.

"It's not a perfect system"

Richard Baum signs off his blog post writing:

"It's not a perfect system, but we believe it's a foundation for facilitating a civil and rewarding discussion that's open to the widest range of people"

He's right, but then I'm yet to see a perfect system.

The fact is that it is very easy to troll and be abusive and disruptive within a community, and hard to design a system that promotes free debate whilst minimising the more unhelpful contributions. This isn't just true of news sites, but of any space where community interaction takes place. For all of us on the web*, commenting remains a work in progress.

* You only have to see the mostly SEO-driven drivel that gets left on this blog as 'comment' to see that news isn't the only area with a quality issue.

Suw Charman-Anderson also has a blog post today on this topic. I particularly like the line: "If you are ashamed of what your comments collected say about you, perhaps you ought to think a bit more about what you say."
