What would the LA Times' datajournalism about teachers look like in the UK?

Last week I was in Berlin for a meet-up around the themes of open data and datajournalism, and one of the speakers was Eric Ulken. He had worked on the LA Times 'datadesk', a loose affiliation of 'computer assisted reporters', investigative reporters and members of the interactive technology and graphics teams. They worked on projects like the 'Homicide blog' and the maps that accompanied it, which came out of a single reporter's dogged perseverance in blogging every single homicide that occurred in L.A. County.

Ulken said that the reaction to that particular blog and its map had differed between Europe and the States.

Firstly, and rather grimly, he pointed out that some of the reaction in the US was to treat the level of homicides as 'business as usual'. As the project was noticed around the world, he found that Europeans were concerned about privacy implications that didn't feature in discussions in the US. Reporting the location of crimes and the race of murder victims was commonplace in the States, but some people in Europe thought this was unnecessarily intrusive.

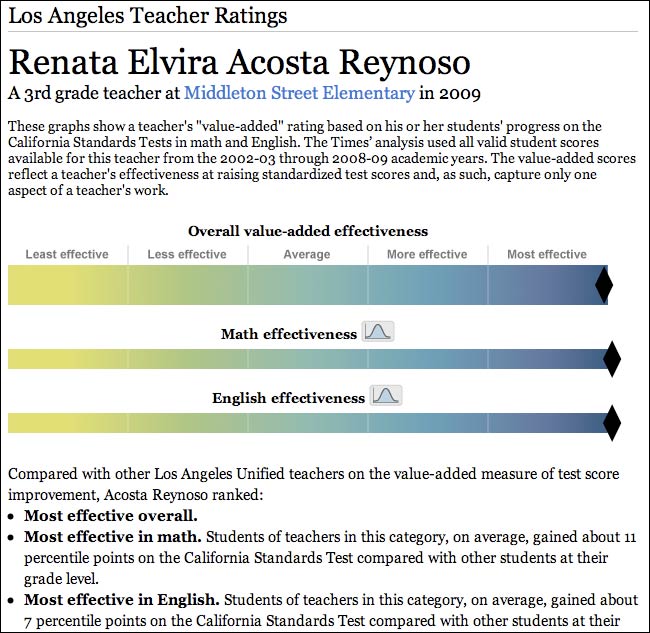

If the 'Homicide blog' made Europeans feel uneasy about privacy, Ulken then topped that by showing the L.A. Times project to assess individual, named teachers within the public education system.

This sparked the longest interruption of anybody's talk in Berlin, as the audience debated the ethics. The tool uses standardised test scores to work out whether individual classes achieved above or below what was expected of them, and then uses that to grade their teachers.
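To make that mechanism concrete, here is a minimal Python sketch of the general 'value-added' idea, under loud assumptions: each class's expected score is simply supplied (a real model would have to predict it from the pupils' prior results), value-added is taken as actual minus expected, and teachers are ranked and split into five equal-sized bands. This is not the L.A. Times' actual method, which the talk didn't describe in detail. The `ClassResult` type, the function names, the data and the three middle labels are all hypothetical; only 'most effective' and 'least effective' come from the talk.

```python
from dataclasses import dataclass

# Only the two end labels come from the talk; the middle three are hypothetical.
LABELS = ["least effective", "less effective", "average",
          "more effective", "most effective"]


@dataclass
class ClassResult:
    teacher: str
    expected: float  # predicted mean score (assumed to come from some model)
    actual: float    # mean score the class actually achieved


def value_added(result: ClassResult) -> float:
    """Positive when the class beat its expected score."""
    return result.actual - result.expected


def grade_teachers(results: list[ClassResult]) -> dict[str, str]:
    """Rank by value-added and split into equal-sized bands, one per label."""
    ranked = sorted(results, key=value_added)
    n = len(ranked)
    grades = {}
    for i, result in enumerate(ranked):
        band = i * len(LABELS) // n  # 0 for the lowest fifth, 4 for the highest
        grades[result.teacher] = LABELS[band]
    return grades


# Invented example data.
classes = [
    ClassResult("A", expected=60, actual=66),
    ClassResult("B", expected=70, actual=68),
    ClassResult("C", expected=55, actual=55),
    ClassResult("D", expected=65, actual=61),
    ClassResult("E", expected=50, actual=53),
]
print(grade_teachers(classes))
# {'D': 'least effective', 'B': 'less effective', 'C': 'average', 'E': 'more effective', 'A': 'most effective'}
```

Because the bands are based on rank, the labels always come out evenly spread: teacher D would still be 'least effective' if every class had landed within a fraction of a point of its expected score.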

One issue raised by the meet-up group was whether the public has a right to know how effective individual, named employees are, even when they are public employees. Another was that the test scores produced numbers, but the scale displayed to the public was qualitative, e.g. 'most effective' or 'least effective'. The teachers were distributed evenly between unsatisfactory and outstanding, but there was no indication of whether being 'unsatisfactory' by this measure actually meant you were a bad teacher. If the margin of error was large and the spread of scores around the mean was small, the results could be effectively meaningless.
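The margin-of-error point can be checked with a little arithmetic. The Python sketch below assumes every teacher's score carries the same margin of error (a simplification; real estimates would each have their own) and treats a teacher as distinguishable from the average only if their score plus or minus that margin excludes the group mean. The function name, the scores and the margin are invented for illustration.

```python
import statistics


def distinguishable_from_mean(scores: dict[str, float], margin: float) -> dict[str, bool]:
    """For each teacher, True if score +/- margin excludes the group mean.

    Assumes one shared, hypothetical margin of error for every estimate.
    """
    mean = statistics.mean(list(scores.values()))
    return {teacher: abs(score - mean) > margin for teacher, score in scores.items()}


# Invented value-added scores with a small spread around the mean.
scores = {"A": 6.0, "B": -2.0, "C": 0.0, "D": -4.0, "E": 3.0}
values = list(scores.values())
print(f"mean={statistics.mean(values):.1f}, spread={statistics.pstdev(values):.1f}")
# mean=0.6, spread=3.6
print(distinguishable_from_mean(scores, margin=5.0))
# {'A': True, 'B': False, 'C': False, 'D': False, 'E': False}
```

With a spread of about 3.6 points and a margin of error of 5, only one teacher can be told apart from the average; teacher D, who would sit at the bottom of any ranking, cannot.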

I got to wondering how this might play out in the UK.

The L.A. Times lists its top 100 teachers by name, but doesn't make a similar list of the bottom 100 available, although browsing through the data would soon uncover poorly rated teachers. I suspect that, given a similar set of data and the willingness to publish, our tabloids might well come at it from the opposite angle, naming and shaming the teachers who are a waste of taxpayers' money. I think as a nation we'd be more likely to adopt the tone of a witch-hunt, as we've seen with social workers who have failed children, than to praise high achievers.

I don't think a similar service would appear here at the moment, though. I'm not a media lawyer, but I'd imagine most of the British press would consider it a legal risk to label a named schoolteacher as 'unsatisfactory' based upon their own interpretation of test results.

But it does raise a big question about datajournalism around schools in the UK.

Whilst we'd probably baulk at rating individual teachers on the test scores achieved by their pupils, we fall over ourselves to rank entire schools, colleges and universities on the same basis. And what is that except an aggregation of the individual effectiveness of the teachers? If we accept that academic institutions can be ranked based on test scores, why shouldn't the public be able to drill down further into that data?

To the bottom line of your post: we shouldn't do that because academic institutions are not private persons. There is a difference between saying that a school is bad and saying that an individual is bad. I also think you're missing a detail here: these rankings are public not only to local people but to the whole world. In the age of the Internet it's quite easy to find a person's personal information, such as their home address (because a lot of people don't care about online privacy), and stalk them. Look, for example, at what 4chan users do from time to time. If somebody attracts their attention in the wrong way, they will literally destroy that person's life. Getting phone calls from stalkers at 3 AM would not make anybody teach better, and that sort of thing can happen because it has happened before.

"what is that except an aggregation of the individual effectiveness of the teachers?"

It's also an aggregation of the pupils' receptiveness to the teaching, and a whole pile of institutional factors that have nothing to do with any individual teacher. Allowing a drill-down suggests a much stronger causal relation between a teacher's ability and test scores than a careful analysis of the data would show. It's already an oversimplification, and it would encourage over-simplistic thinking in an insensitive and, as Alice says, potentially harmful way.

Very interesting on the homicide blog. Yes, I think that is something Americans would be very interested in and consider commonplace. Publishing the performance of public employees such as teachers, however, is something I'd never thought of, but I like the idea. Our schools are failing in far too many cases, and parents armed with specific knowledge can take specific action.

In my opinion, teachers do their work; it is the pupils who lack capability.

Sometimes students don't help themselves, even when teachers do their very best to help them.

But overall, a student's performance depends on the teacher, the school and the students themselves, especially when they are affected by social problems.

Thanks.