How the Guardian’s custom CMS & API helped take content strategy to a traditional publisher

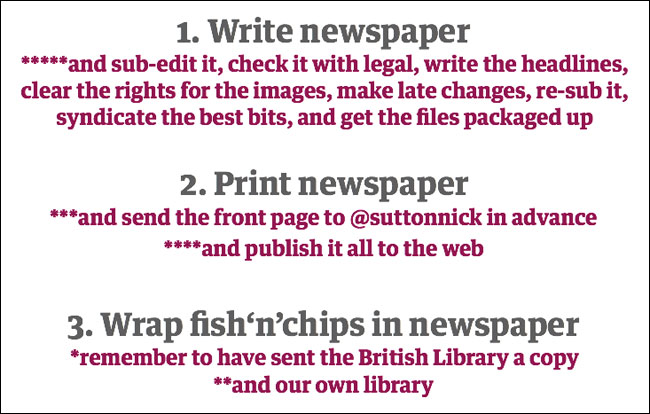

Digital publishing has radically changed many businesses, not least of all the newspaper industry. Take our old content life-cycle, which essentially went something like 1) Write newspaper 2) Print newspaper 3) Wrap fish‘n’chips in the newspaper.

Well, OK, that is a gross over-simplification for comedic effect.

I forgot a couple of key points. At the chipwrapper stage, you have to remember to deposit a copy with a copyright library like the British Library. And with our own library too, since the Guardian is one of the few global newspapers that still has a library with books and researchers and librarians and stuff.

And there are some changes at the print stage too. You have to send the front page in advance to people like @suttonnick who publishes a #tomorrowsnewspaperstoday stream on Twitter. And publish it all to the web.

I’ve also missed out quite a few steps at the writing stage - including commissioning, subbing, layout, scrubbing half the edition because Murdoch has just been hit with a pie in the face and having to start all over, re-subbing it, checking with legal, clearing image rights and so on and so on.

Along the way I casually slipped in the phrase “and publish it all to the web”. And that is what has changed.

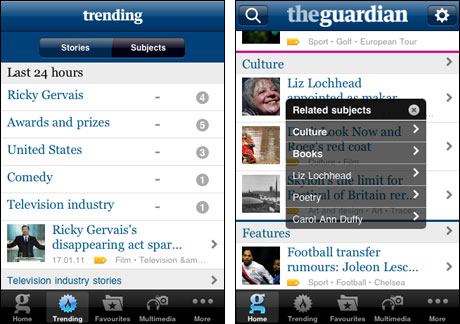

I don’t just mean web, though, do I? Yesterday Karen McGrane referenced “the splinternet” and talked about the proliferation of devices, screen sizes and resolutions that we now publish content to. For the Guardian this means our award-winning iPhone app, our m.guardian mobile-optimised website, our desktop site, and the promise of much more to come, including an iPad edition and apps for Android and Windows Phone 7.

The m.guardian.co.uk site.

Not all content can be used everywhere. Apple’s refusal to run Flash on its iOS devices has forced businesses to choose between packaging content up in SWFs, which display reliably pretty much anywhere except on the iDevices, and going down the route of HTML5, which still has patchy and inconsistent browser support. Some interactives on the Guardian website render with a limp one-line apology.

It is important to get these things right. Publishing a story on the web that says “(see diagram right)”, when the actual diagram hasn’t made it through the web production process sends out a message to your users that you are publishing for the convenience of your systems, not for the convenience of the format they are trying to view it on.

No longer a “one day” game

One of the biggest changes to hit newspapers is that publishing is no longer a one-off, one-day event. Content on the web tends to hang around for a lot longer. At the Guardian we still have our Euro 2000 site up and running from eleven years ago. I think it is great that this content, and the graphical style of the web that went with it, is still available.

But...it means we still have to keep running some legacy servers and CMS code just to keep those pages available. At some point we have to bite the bullet to migrate or kill the content. And as Lisa Welchman said yesterday: “We all know that web content servers are things that you put stuff on. You never take it off.”

If that longevity provides a new overhead for our content business, then it also provides new opportunities. We have recently launched a series of ebooks called “Guardian Shorts”. The aim is to create short anthologies of news content around topical events, repurposed from the paper, and then sold on Kindles and in the iBookstore for between £1.99 and £3.99. Our first title - “Phone hacking - How the Guardian broke the story” - very quickly made it into the best-selling list of politics books on the Kindle store, and we promote it alongside our coverage of the scandal. It is potentially part of a business model where the long tail value of our content helps to subsidise the creation.

The series also exposes some of the limitations of the news medium as content though. I am editing a couple of titles for the series myself, and I find myself repeatedly scoring out from the manuscript the same establishing paragraphs over and over and over and over again. Written for consumption in the print newspaper, the author has to assume that the reader has little prior knowledge of a person or story, and that they have no reference material to hand. Online, hyperlinks ought to render that repetition unnecessary, providing background information when the individual reader needs it, not the “one-size-fits-all” approach of print.

Shine a light on the dark corners of your website

In the newspaper you would traditionally only have a little bit of “peripheral” content or furniture - a publisher’s imprint, some contact phone numbers and the like. On the web, though, this type of content simply multiplies, and you can end up with a host of help text, FAQs, privacy policies, community standards and the like.

Traditional publishers need to get more of a “web governance” mindset with this content. We do make notes within our CMS about page owners, but this isn’t enforced, and generally the Guardian’s web CMS has a very laid-back approach to permissions and workflow management. We’ve hired bright people, and we trust them to do their jobs, without worrying about writing tens of thousands of pounds worth of software to prevent them doing dumb things.

Tags are magic!

Metadata is really important to us, and I have been very lucky to come to a company where a lot of the hardcore IA has already been done, and done brilliantly. When we built our own CMS we started by locking the software architects and the editors in a room, and not allowing them out until they had agreed on a definition of exactly what a piece of content consisted of and agreed a common vocabulary. OK, maybe we didn’t actually lock them in a room, but using the Domain Driven Design process for the software solved a lot of potential problems for us.

See also: “Domain-Driven Design in an Evolving Architecture” - an article about the development of the Guardian CMS by Mat Wall and Nik Silver

One of these was the evolution of our tagging. Items of content are tagged with their:

- Content type (text, audio, video etc)

- Publication origin (i.e. Guardian, Observer or web)

- Contributor (i.e. author or photographer)

- Section (i.e. the main area of the site which they belong to)

- Tone (e.g. a news article, a comment piece, a match report, a review, an obituary)

- Subject (we have around 9,000 topic keyword tags)

Tags determine where an article belongs on the site, and allow it to belong in multiple places. A review of the film “The Damned United” is also placed on the Leeds United page by simply tagging it “Leeds United”. Nobody from the film desk has to talk to the sport desk about the article to get it placed on the relevant page.
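As a rough sketch of that idea (not the Guardian's actual CMS code), an item of content can carry a set of typed tags, and each tag page is simply the list of items carrying that tag. The `Tag`, `ContentItem` and `build_tag_pages` names, and the sample tags, are hypothetical and only there for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Tag:
    kind: str  # e.g. "type", "publication", "contributor", "section", "tone", "subject"
    name: str


@dataclass
class ContentItem:
    headline: str
    tags: set = field(default_factory=set)


def build_tag_pages(items):
    """Map each tag to the headlines that should appear on its page."""
    pages = defaultdict(list)
    for item in items:
        for tag in item.tags:
            pages[tag].append(item.headline)
    return pages


# Hypothetical example: one film review that also belongs on a football page.
review = ContentItem(
    headline="The Damned United review",
    tags={
        Tag("type", "text"),
        Tag("section", "Film"),
        Tag("tone", "Review"),
        Tag("subject", "Leeds United"),
    },
)
pages = build_tag_pages([review])
print(pages[Tag("subject", "Leeds United")])
print(pages[Tag("section", "Film")])
```

The point of the structure is that placement is a by-product of tagging: adding one more tag to the item is all it takes for it to appear on another page.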

We can also combine tags in our URLs. This can be really useful. Combining the subject tag “Books” with the tone tag “Review” gives us an automatic set of pages listing every book review. Combining the country tag “Japan” with “Natural disasters” gives us a page to gather all the stories about a tsunami, until we decide it is such a big news story that “Japan disaster” deserves a keyword of its own.

And we can combine “bullfighting + vuvuzelas” and “chess + boxing” for comedy effect - you can follow @guardian_tags for topical examples as they crop up.
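Combining tags boils down to a set intersection: a combined page lists only the items that carry every tag asked for. Here is a minimal sketch of that, assuming a made-up URL path format where tag slugs are joined with `+`; the function names, slugs and sample data are all hypothetical.

```python
def tags_from_path(path):
    """Split a hypothetical combiner path such as '/books+reviews' into tag slugs."""
    return {slug for slug in path.strip("/").split("+") if slug}


def combine_tags(items, required_tags):
    """Return headlines of the items that carry every one of the required tags."""
    wanted = set(required_tags)
    return [headline for headline, tags in items if wanted <= tags]


# Hypothetical sample data: (headline, set of tag slugs).
items = [
    ("Novel of the week", {"books", "reviews"}),
    ("Author interview", {"books", "interviews"}),
    ("Album of the week", {"music", "reviews"}),
]

print(combine_tags(items, tags_from_path("/books+reviews")))
```

Because the combined page is computed from the tags rather than curated by hand, any pairing of tags produces a page, including the silly ones.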

Tags mean we can segment a contributor’s profile by the topics they write about. We also have a tag manager to arbitrate disputes and make sure that our tagging structure makes sense, using tools like a “batch editor”, an advanced tag search interface, and a daily report of the tags that have been created.

There are a lot more things that we do with our tags. See “Tags are magic!” - a series of blog posts on the Guardian Developer blog by Peter Martin and Martin Belam

If tags are magic, then an API is wizardry

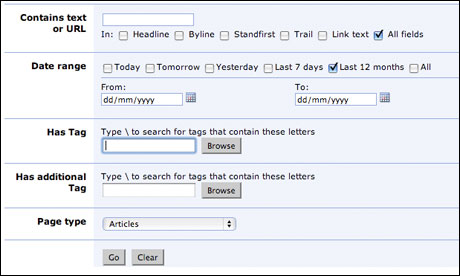

The Guardian made a significant investment in building a content API, and it is one that is allowing us to reap rewards. If you are not familiar with the concept of an Application Programming Interface, then think of it as a giant advanced search onto the Guardian’s content - but for machines. By constructing complex queries, apps can retrieve exactly the set of content they require.

There is a human interface too - not the most beautiful it must be said - where you can trial queries and see the resulting output in XML or JSON. With an API key, you can make automated queries to the service. A free tier allows you to freely reuse Guardian content whilst also carrying some of our advertising, and more advanced tiers allow more frequent access on more of a syndication-style basis.
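To give a flavour of what an automated query looks like, here is a small sketch that only builds a search URL and does not send it. The base URL and the parameter names (`q`, `tag`, `format`, `api-key`) are my assumptions for illustration, as is the tag value; check the API documentation for the real ones before relying on them.

```python
from urllib.parse import urlencode

# Assumed endpoint, for illustration only.
BASE_URL = "https://content.guardianapis.com/search"


def build_search_url(query, tag=None, api_key="YOUR-API-KEY", response_format="json"):
    """Build a search URL from a free-text query and an optional tag filter."""
    # Parameter names here are assumptions, not taken from the article.
    params = {"q": query, "format": response_format, "api-key": api_key}
    if tag:
        params["tag"] = tag
    return f"{BASE_URL}?{urlencode(params)}"


print(build_search_url("phone hacking", tag="media/phone-hacking"))
```

Swapping `response_format` to `"xml"` would ask for the other output format mentioned above, again assuming the parameter is spelled that way.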

Our content management systems converge on storing our material in a giant Oracle database. We take very frequent copies of that and use that to power the API, which is built on the Solr platform and runs in the cloud. Client libraries for languages like Python, Java and even Perl make it easy for developers to access the content and build apps.

In fact, we use it ourselves to build our own apps. Our iPhone app and m.guardian.co.uk mobile optimised site are powered from the API, as are our forthcoming Android and Windows Phone apps.

Other people use it too, and we are able to fold those applications back into our site - like the Recipe Search which was written by an external developer. It appears on our site, but carries the “What could I cook?” logo too. It is a relationship that works well for both parties.

And the website even begins to eat itself - we have components on the site that are powered by our API to give us related content links, and to match Guardian reviews with the right books and music artists on our arts pages.
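I won't go into how those components actually choose their links, but one simple way to get related content out of tagged material is to rank candidates by how many tags they share with the article being read. The sketch below does just that; the ranking rule, the function name and the sample data are all assumptions of mine.

```python
def related_content(target_tags, candidates, limit=3):
    """Rank candidates by how many tags they share with the target article."""
    scored = []
    for headline, tags in candidates:
        shared = len(target_tags & tags)
        if shared:
            scored.append((shared, headline))
    # Most shared tags first; ties broken alphabetically for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [headline for _, headline in scored[:limit]]


# Hypothetical sample data: (headline, set of tag slugs).
target = {"books", "reviews", "fiction"}
candidates = [
    ("Poetry round-up", {"books", "poetry"}),
    ("Debut novel review", {"books", "reviews", "fiction"}),
    ("Match report", {"football"}),
    ("Crime fiction review", {"books", "reviews"}),
]

print(related_content(target, candidates))
```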

Where the API really simplifies things from a content structure point of view is that at any moment, when someone is proposing changes to the structure or content of the website, you have to ask the question “How would that manifest itself in the API”. The API forces us to work hard to continue to keep our content model correct, and to separate content from presentation.
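A toy illustration of what separating content from presentation means in practice: the article is stored as plain structured fields, and each output format is just a different rendering of the same data. The field names and the three renderers are hypothetical, not our real content model.

```python
import json
from html import escape

# Hypothetical structured article: fields only, no markup or layout.
article = {
    "headline": "Tags & APIs",
    "standfirst": "How structure helps publishing",
    "body": ["First paragraph.", "Second paragraph."],
}


def to_json(item):
    """Machine-readable form, e.g. for an API response."""
    return json.dumps(item, sort_keys=True)


def to_html(item):
    """Web page fragment; escaping happens here, not in the stored content."""
    paragraphs = "".join(f"<p>{escape(p)}</p>" for p in item["body"])
    return f"<h1>{escape(item['headline'])}</h1>{paragraphs}"


def to_plain_text(item):
    """Plain text form, e.g. for a small-screen or ebook export."""
    return "\n\n".join([item["headline"], *item["body"]])


print(to_html(article))
```

If a proposed change to the site cannot be expressed as a change to the fields or to one of the renderers, that is usually the sign that presentation is leaking into the content.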

See also: “From Publisher to Platform: 14 ways to get benefits from social media” by Mike Bracken on the Guardian Developer blog

Six tips for emerging “Content Strategy”

I’ve found the talks at the “Content Strategy Forum” to be really invigorating, and Karen McGrane’s in particular has inspired me to rip up some of my forthcoming plans in order to concentrate more on content management. Her talk also caused me to ditch a load of slides from this talk to focus on our tags and API instead.

But what I wanted to do most of all was to try and pass on some tips to new and emerging content strategists from having quite a few years in the field of working in digital media companies. There are six in all...

1. Don’t be patronising

OK, so it might be slightly patronising to say that in itself, but I think it is key to successfully approaching a more traditional publisher. I know from experience as an IA that taking an attitude of “You are doing it all wrong, and to solve your problems you need the ninja powers of a digital discipline you’ve never heard of” is not a way to win friends and influence people. Remember that everybody and every organisation has to start somewhere, and that the pace of digital disruption has been uneven. The chances are that a lot of your content strategy skills come from observations of people doing things badly, not because your knowledge of the field came fully formed. Give other people the chance to learn and improve.

2. Build a portfolio of problems

Talking of gaining experience from where others have gone wrong, you should be building a portfolio of problems that you see people experiencing with their digital content. When you are selling the value of content strategy to a traditional business, don’t show them what they are doing wrong, show them what other companies in their vertical are doing wrong with their content. Use that to sell your services not just as a corrective or remedial measure to some analogue content practices, but as a way of gaining competitive advantage.

3. Steer clear of the articles (to start with)

One of the problems with the discipline name of “content strategy” is that if you tell people they need more of it, without a knowledge of the field it sounds like you are saying “Hey! Your content sucks, and you don’t even look after it properly”.

Now, this may well be what you are saying on the inside, but on the outside you need more of a winning approach.

Don’t start by attacking the copy on the company’s landing page - start with demonstrating how you could improve the peripheral content. Target error messages, modal dialogues, sign in and registration pages. Tackle the copy the client doesn’t care about, to earn trust and respect before you start messing with the messages that people are going to be closely attached to. Most authors hate being edited by an editor, let alone by someone whose job title they may never have heard before...

4. Never, ever, ever, ever use “hipster ipsum”

In fact, never use dummy content at all.

The easiest way to distinguish yourself and your craft from the hordes of UX and design people who “also do content strategy” will be to relentlessly focus on copy and content and governance. Leave the fancy-schmancy tools to generate “ironic” and “edgy” greeking to them.

5. Don’t forget your IA

On the first day of the conference I heard a lot about navigation and labels and tasks. I’ve been hearing a lot about that at every conference I’ve ever attended. Don’t lose sight of the fact that there is a lot of prior art out there. Sure, it can be improved, and viewed with a more content-focused eye, but you don’t need to erect your own scaffolding. The content strategy discipline has a unique opportunity to discover new talent and new thought leaders, but trust me, nobody comes out the other side of reading “the polar bear” book knowing less about how to make great web content experiences.

6. Build APIs

Well, maybe not actually do the building - that can be quite hard - but at least champion APIs. Having an API forces a business to think long and hard about the representation of content in a structured way. Get the content model right for an API once, and you can really live the dream of separating content from presentation and “create once, publish everywhere”.

The future

I usually finish my talks with a starry-eyed technophile ode to the future. Some people argue that the future turned out to be rubbish because we didn’t get jet-packs and hovercars.

Nonsense.

The future turned out brilliant.

I can sit in bed watching the telly on a touchscreen computer, with the pictures being beamed up from a small box in the corner of the room downstairs connected to the phone line. Who wouldn’t have been impressed with that in the 90s? Let alone when I grew up in the *cough* 1860s or whenever it was...

And it is difficult to predict what the future of devices will be. Five years ago the iPad still seemed like something from a science-fiction future. In five years’ time I assume devices will be smaller, more powerful, more connected, and doing things that I cannot imagine. That makes it incredibly exciting to work with digital technology.

But the driving force for the consumption on those devices will still be content. People love stories, videos, audio, gossip, news, talking to each other and playing. All of those things rely on presenting them with content in an optimum way. I think the content strategy discipline that is emerging as a field in its own right can look forward to being at the forefront of creating great content experiences, and solving content problems. With that work, the future will carry on being brilliant.

Thanks, acknowledgements, disclaimer, the usuals

I should first point out that this is my personal blog. The views expressed are my own, and do not reflect the views of Guardian News and Media Limited, or any current or former employers or clients. You can read my blogging principles.

Whenever I write about the Guardian’s CMS, API or tagging model, I am truly standing on the shoulders of giants and being the public face of a lot of hard work by some very talented people - too numerous to name them all but I would single out Mat Wall, Swells, Tackers, MOB, MBS, Matt McAlister, Peter Martin and Chris Moran.

Finally, a big thank you to the organisers of the Content Strategy Forum, Jonathan Kahn, Randall Snare and Destry Wion for inviting me to speak.

This is one of a series of blog posts written at the Content Strategy Forum 2011 in London:


“How the Guardian’s custom CMS & API helped take content strategy to a traditional publisher” - Martin Belam

Gerry McGovern, Melissa Rach and Margot Bloomstein at Content Strategy Forum 2011

“CMS - the software UX forgot” - Karen McGrane

Lisa Welchman and Eric Reiss at Content Strategy Forum 2011

“Making sense of the (new) new content landscape” - Erin Kissane

“Agile and content strategy” - Lisa Moore

“Measurement, not fairy tales” - Catherine Toole

“Topic maps, disambiguation, and multi-disciplinary teams” - Elizabeth McGuane

You might also be interested in these notes on these talks from the August London Content Strategy meet-up:

Lisa Welchman, Sophie Dennis and Tyler Tate

So you're relying on tags being done correctly and in the same way all the time by everyone who works for GMG - um, given the basic errors GMG writers seem to make every day (managing to misspell Gandhi was one recent howler), that's a big ask.

Have you looked at real undirected ML to generate clusters? Looks like you have it in Solr already, so getting it into a suitable word vector format and then punting it through some suitable algorithm should not be that hard.